Low-rank Bayesian weight matrix

The variational posterior is placed over the factors rather than directly over every entry of the full matrix.

Low-rank Bayesian neural networks with singular posterior geometry, reduced complexity scaling, and scalable uncertainty quantification.

We parameterize Bayesian neural network weights through low-rank factors $W = AB^\top$, inducing a singular posterior geometry concentrated on the rank-$r$ manifold. This reduces variational complexity from $O(mn)$ to $O(r(m+n))$ while maintaining competitive uncertainty-aware performance across MLPs, LSTMs, and Transformers.

Low-rank variational BNNs do not merely save parameters — they change the geometry, covariance structure, and complexity of the posterior.

End-to-end variational learning over low-rank factors $W = AB^\top$, trained from scratch without pretrained backbones.

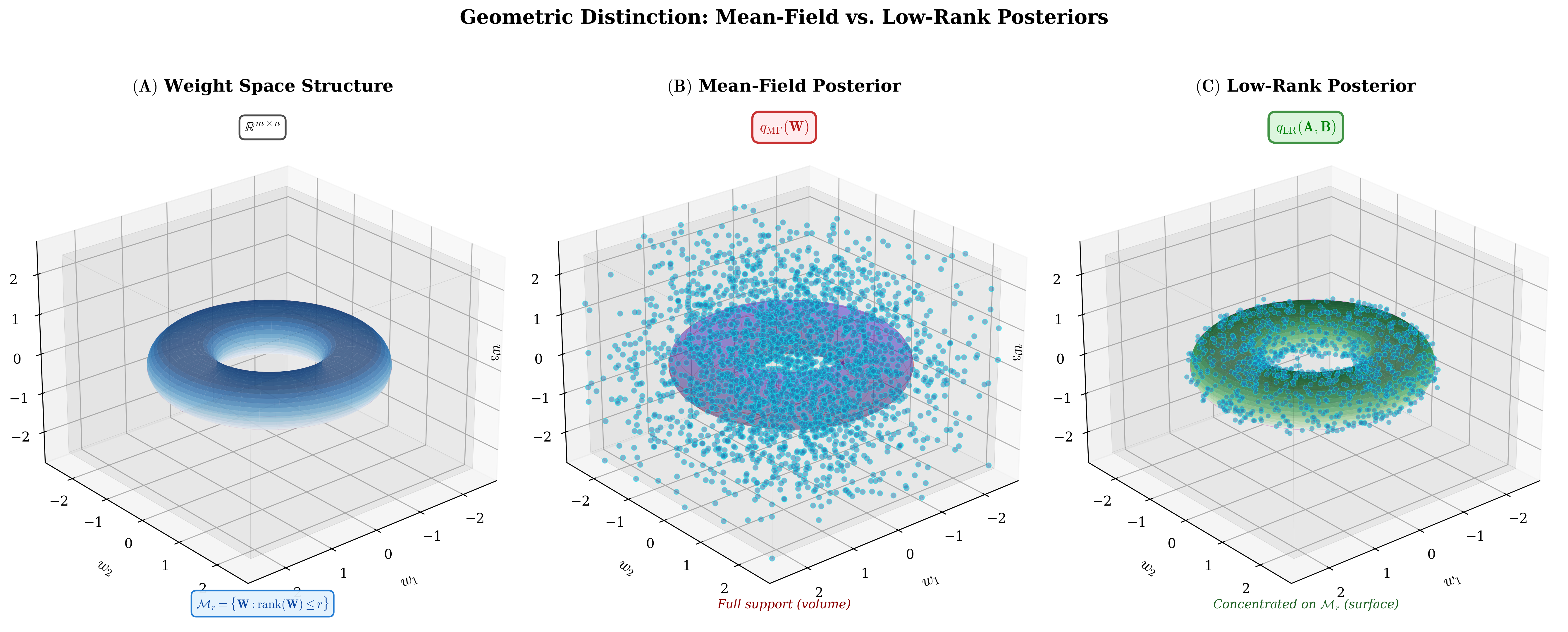

The induced posterior $q_W$ is a pushforward measure supported on the rank-$r$ manifold $\mathcal{R}_r \subset \mathbb{R}^{m \times n}$.

$q_W$ is singular with respect to Lebesgue measure on the ambient weight space.

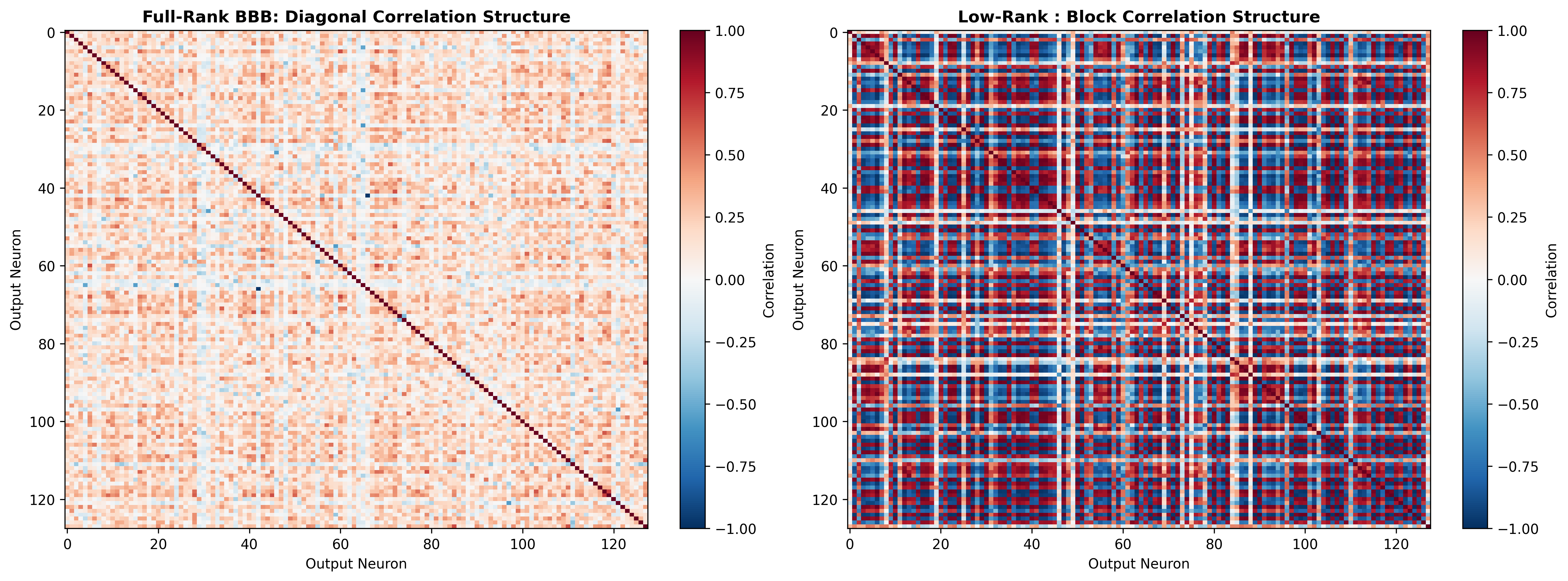

Mean-field factors over $A, B$ still induce structured, non-trivial covariance between entries of $W$ through shared latent dimensions.

Three certificates: approximation error (Eckart–Young–Mirsky), PAC-Bayes generalization bounds, and Gaussian complexity transfer.

Validated on MLPs (MIMIC-III), LSTMs (Beijing PM₂.₅), and Transformers (SST-2) with OOD evaluation on domain-shifted test sets.

Bayesian neural networks provide principled uncertainty, but full weight-space inference does not scale. Three obstacles block practical deployment.

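Standard mean-field variational inference (MFVI) requires two variational parameters (a mean and a scale) per weight, doubling the parameter count of each layer. For a single $m \times n$ matrix this is $2mn$ variational parameters, i.e. $O(mn)$ scaling.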

Fully factorized posteriors ignore structured correlations between weights, limiting expressiveness and the model's ability to represent epistemic uncertainty coherently.

Deep Ensembles, the practical gold standard, require 5 full model copies, making them prohibitively expensive for modern-scale architectures.

This paper is not LoRA with uncertainty pasted on top. The novelty is the induced posterior measure over $W$: its support, geometry, covariance, and complexity all change.

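Standard Bayesian neural networks often rely on fully factorized posteriors that ignore structured correlations between weights. We instead introduce low-rank variational factors $A \in \mathbb{R}^{m \times r}$ and $B \in \mathbb{R}^{n \times r}$ with $W = AB^\top$, and place the variational posterior on $A$ and $B$.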

Although the factors themselves can be mean-field, the induced posterior over $W$ becomes highly structured, with correlations introduced through shared latent factors. The model is optimized with the reparameterization trick (Bayes by Backprop) and Adam, and the layers are implemented as drop-in Keras replacements for dense layers, LSTM gates, and Transformer projections.

The prior on $A$ is a scale mixture of a broad Gaussian ($\sigma_1^2=1$) and a narrow spike ($\sigma_2^2 \approx 0.002$), which encourages sparse structure while allowing occasional large weights. The same prior is used for $B$.
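As a rough illustration of the two-component prior described above, the log-density can be evaluated with a log-sum-exp over the broad and narrow Gaussians. The mixing weight `pi` is not stated in the text, so the value 0.5 below is purely an assumption, as are the function names.

```python
import numpy as np

def log_normal(x, var):
    # Log-density of a zero-mean Gaussian with variance `var`.
    return -0.5 * (np.log(2.0 * np.pi * var) + x**2 / var)

def log_scale_mixture_prior(x, pi=0.5, var1=1.0, var2=0.002):
    # pi is a hypothetical mixing weight (not given in the source).
    # var1 is the broad component, var2 the narrow spike.
    broad = np.log(pi) + log_normal(x, var1)
    spike = np.log1p(-pi) + log_normal(x, var2)
    return np.logaddexp(broad, spike)

# Sanity check: the mixture density integrates to about 1 on a fine grid.
grid = np.linspace(-8.0, 8.0, 320001)
dx = grid[1] - grid[0]
total_mass = float(np.sum(np.exp(log_scale_mixture_prior(grid))) * dx)
```

The spike concentrates mass near zero (favoring small factor entries), while the broad component keeps large entries from being penalized too heavily.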

Positivity of the posterior standard deviation is enforced via a softplus transform of $\rho_A$. Sampling is differentiable w.r.t. $(\mu_A,\rho_A)$, allowing gradients to flow through Monte Carlo ELBO estimates.
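A minimal NumPy sketch of one reparameterized draw, assuming Gaussian mean-field factors with standard deviation `softplus(rho)`. It only illustrates the sampling step; the paper's layers are Keras layers, and the function and variable names here are hypothetical.

```python
import numpy as np

def softplus(x):
    # Numerically stable log(1 + exp(x)); strictly positive.
    return np.logaddexp(0.0, x)

def sample_low_rank_weight(mu_A, rho_A, mu_B, rho_B, rng):
    """Draw one W = A @ B.T from mean-field Gaussian factor posteriors."""
    # Reparameterization: factor = mean + std * standard normal noise.
    A = mu_A + softplus(rho_A) * rng.standard_normal(mu_A.shape)  # (m, r)
    B = mu_B + softplus(rho_B) * rng.standard_normal(mu_B.shape)  # (n, r)
    return A @ B.T                                                # (m, n)

rng = np.random.default_rng(0)
m, n, r = 64, 32, 4
mu_A = 0.1 * rng.standard_normal((m, r))
mu_B = 0.1 * rng.standard_normal((n, r))
rho_A = np.full((m, r), -3.0)  # small initial std, an arbitrary choice
rho_B = np.full((n, r), -3.0)

W = sample_low_rank_weight(mu_A, rho_A, mu_B, rho_B, rng)
```

Every sampled `W` has rank at most `r`, so the draws never leave the low-rank set even though the noise is injected independently into each factor entry.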

Each architecture requires one key adaptation. The variational layers are drop-in replacements for standard Keras layers.

Each dense layer $W_\ell \in \mathbb{R}^{d_\ell \times d_{\ell+1}}$ uses $W_\ell = A_\ell B_\ell^\top$ with its own rank $r_\ell$, tunable independently. No architecture-specific modifications needed — identical variational layers.

$W_{ih}$ and $W_{hh}$ are factorized. Following Fortunato et al. (2017), factors $A,B$ are sampled once per batch, $W = AB^\top$ is cached across all $T$ time steps, and KL divergence is computed once per sequence. Forget gate bias initialized to 1.0.

Q/K/V/FF projections use the same variational layers as MLPs. For the embedding $W_{emb} \in \mathbb{R}^{V \times d}$, only the rows of $A$ corresponding to tokens in the current batch are sampled, reducing the sampling cost from $O(Vd)$ to $O(|U|r + dr)$, where $|U|$ is the number of unique tokens in the batch.
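The embedding trick can be sketched as follows, assuming Gaussian mean-field factors and a softplus scale. This is an illustrative NumPy version with hypothetical names, not the paper's Keras layer.

```python
import numpy as np

def softplus(x):
    return np.logaddexp(0.0, x)

def sample_batch_embeddings(token_ids, mu_A, rho_A, mu_B, rho_B, rng):
    """Sample embeddings only for tokens that occur in the batch."""
    flat = token_ids.ravel()
    unique_ids, inverse = np.unique(flat, return_inverse=True)
    r = mu_A.shape[1]
    # Noise for |U| rows of A instead of all V rows.
    A_u = mu_A[unique_ids] + softplus(rho_A[unique_ids]) * rng.standard_normal((unique_ids.size, r))
    # B is shared by all tokens: d x r.
    B = mu_B + softplus(rho_B) * rng.standard_normal(mu_B.shape)
    E_u = A_u @ B.T  # (|U|, d)
    # Scatter back to the batch layout: (batch, seq, d).
    emb = E_u[inverse].reshape(token_ids.shape + (mu_B.shape[0],))
    return emb, unique_ids

rng = np.random.default_rng(0)
V, d, r = 12, 8, 3
mu_A, rho_A = rng.standard_normal((V, r)), np.full((V, r), -2.0)
mu_B, rho_B = rng.standard_normal((d, r)), np.full((d, r), -2.0)
token_ids = np.array([[5, 2, 5], [9, 2, 2]])

emb, unique_ids = sample_batch_embeddings(token_ids, mu_A, rho_A, mu_B, rho_B, rng)
```

In this sketch a token that appears several times in a batch shares one sampled row of $A$, so repeated tokens receive identical embeddings within that forward pass.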

GPU Profiling · SST-2 · Controlled single-device benchmark

All models trained for the same number of steps on the same GPU.

Low-Rank BBB: 13× fewer parameters than Full-Rank BBB, ~50% of the peak memory, and 3.2× faster per epoch than Deep Ensemble. Full Bayesian uncertainty at a fraction of the cost.

The paper's key move is to define a simple mean-field posterior in factor space, then study the induced posterior measure over the full weight matrix.


The factors may be mean-field, but the induced posterior over $W$ is not an independent posterior over weight entries.

This pushforward measure is the central object: it describes the posterior distribution over the actual weight matrix $W$.

When $r < \min(m,n)$, the posterior is supported on the rank-at-most-$r$ set $\mathcal{R}_r$, which has Lebesgue measure zero in the ambient matrix space.
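A short dimension count makes the measure-zero statement concrete. It uses the standard fact that the matrices of rank exactly $r$ form a smooth manifold of dimension $r(m+n-r)$; the notation follows the text above.

$$
mn - r(m+n-r) \;=\; (m-r)(n-r) \;>\; 0 \qquad \text{whenever } r < \min(m,n).
$$

The set $\mathcal{R}_r$ therefore has strictly lower dimension than the ambient space $\mathbb{R}^{m \times n}$, so any measure supported on it assigns all of its mass to a Lebesgue-null set. For example, with $m=n=512$ and $r=16$, the low-rank set has dimension $16 \cdot 1008 = 16128$ inside an ambient space of dimension $262144$.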

The loss gap between the optimal full-rank solution $W^\star$ and its best rank-$r$ approximation $W_r^\star$ is controlled by the tail singular values. Rapid spectral decay means small rank-induced bias.

In practice, the learned $W = AB^\top$ may not achieve the optimal rank-$r$ approximation $W_r^\star$. The total error decomposes into two additive components:

Optimization error: how far the trained factorization is from the optimal rank-$r$ solution. It is reducible with better optimization and is not a fundamental limitation.

Approximation error: the unavoidable error from restricting to rank $r$. It is small when layer spectra decay quickly, which modern networks exhibit.

Shared latent factors induce non-zero covariance between weight entries, even though the factor posterior itself is mean-field. Rank $r$ controls how expressive these correlations can be.
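To see the induced correlation numerically, consider two entries in the same row, $W_{ij}$ and $W_{ij'}$ with $j \neq j'$. Under independent Gaussian factors a direct calculation gives $\mathrm{Cov}(W_{ij}, W_{ij'}) = \sum_k \sigma_{A,ik}^2\, \mu_{B,jk}\, \mu_{B,j'k}$, which is generally non-zero. The sketch below compares this expression against a Monte Carlo estimate; all numbers are arbitrary illustrative values.

```python
import numpy as np

def induced_row_covariance(mu_B, sigma_A_row, j, jp):
    """Analytic Cov(W[i, j], W[i, jp]) for j != jp, independent factors."""
    return float(np.sum(sigma_A_row**2 * mu_B[j] * mu_B[jp]))

rng = np.random.default_rng(0)
r, n, S = 2, 3, 200_000            # rank, columns of W, MC samples
mu_A_row = np.array([1.0, -0.5])   # means of row i of A
sigma_A_row = np.array([0.5, 0.5]) # stds of row i of A
mu_B = np.array([[1.0, 1.0], [1.0, 0.5], [-1.0, 2.0]])  # (n, r)
sigma_B = 0.3

A = mu_A_row + sigma_A_row * rng.standard_normal((S, r))  # (S, r)
B = mu_B + sigma_B * rng.standard_normal((S, n, r))       # (S, n, r)
W_row = np.einsum("sk,sjk->sj", A, B)                     # (S, n)

analytic = induced_row_covariance(mu_B, sigma_A_row, 0, 1)
empirical = float(np.cov(W_row[:, 0], W_row[:, 1])[0, 1])
```

The two entries covary only through the shared row of $A$, so the covariance vanishes if that row is deterministic or if the corresponding columns of $\mu_B$ are orthogonal under the $\sigma_A^2$ weighting.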

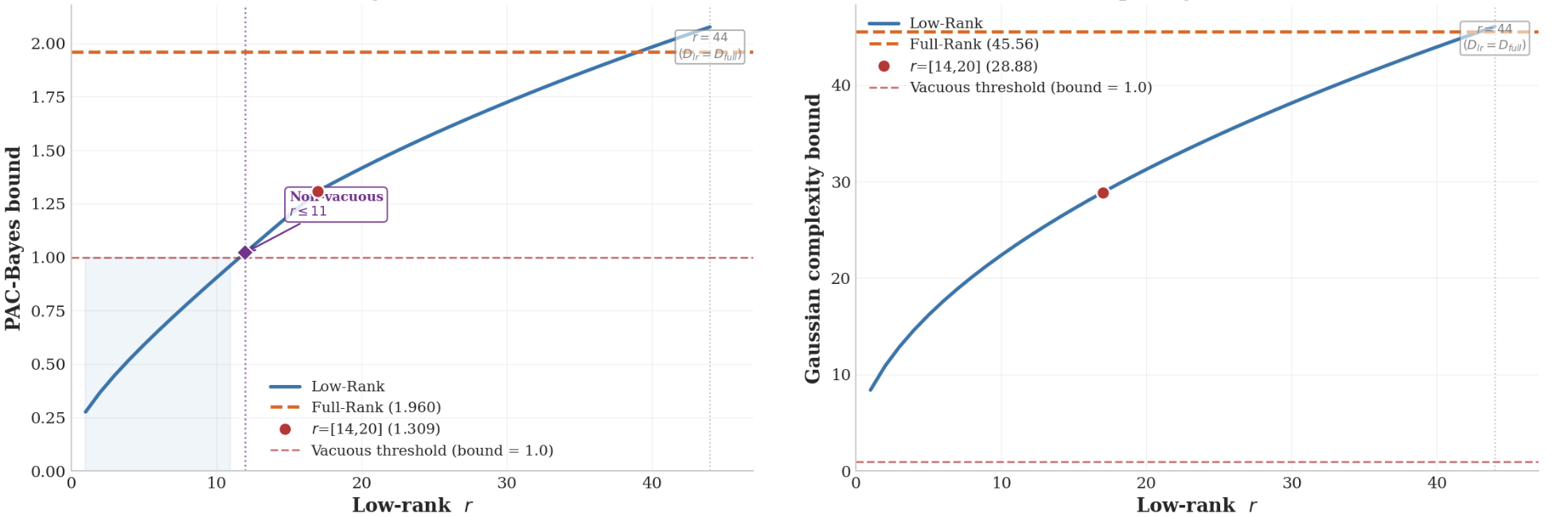

The paper provides three theory certificates. Each addresses a distinct aspect of the low-rank posterior's behaviour.

The induced posterior is singular w.r.t. Lebesgue measure on the ambient weight space.

Low-rank posteriors reduce the dominant complexity scaling term when $r \ll \min(m,n)$.

The BNN predictive mean lies in the closed convex hull of the support class. Gaussian complexity is invariant under convex hull and closure, so deterministic complexity bounds transfer to Bayesian predictive means.

The loss gap between the optimal full-rank $W^\star$ and its best rank-$r$ approximation $W_r^\star$ (both defined in §04) is controlled by the tail singular values. Rapid spectral decay means small rank-induced bias.

Full-rank MFVI complexity scales as $O(mn)$; low-rank scales as $O(r(m+n))$. The ratio $\mathrm{Complexity}(Q_\mathrm{LR})/\mathrm{Complexity}(Q_\mathrm{full}) \approx \sqrt{r(1/m + 1/n)} \ll 1$ when $r \ll \min(m,n)$. The bound is non-vacuous for low ranks where full-rank BNNs already fail.

The BNN predictive mean lies in $\overline{\mathrm{conv}}(\mathrm{supp}\,q_W)$. Since Gaussian complexity is invariant under convex hull and closure, the deterministic low-rank complexity bound $\mathcal{G}(F^{\mathrm{Pinto}(C,r)})$ transfers directly to the Bayesian predictive mean — linking frequentist generalization theory to the Bayesian low-rank posterior.

As argued by Wilson (2020), generalization depends on the support and inductive biases of the posterior. Mean-field and SBNN impose fundamentally different biases.

Mean-field treats each weight as a freely adjustable parameter. Updating $w_{ij}$ only touches that entry, so local memorization is possible. The posterior has positive density everywhere in $\mathbb{R}^{m\times n}$, imposing no structural constraint on which weight configurations are reached.

SBNN restricts posterior support to $\mathcal{R}_r$. Updating $W_{ij} = \sum_k A_{ik}B_{jk}$ requires modifying shared factors that affect entire rows and columns simultaneously. This prevents local memorization and enforces coherent uncertainty propagation across connected weights.

For $m=n=512$ (relative complexity vs full-rank MFVI, lower is better):
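The ratio $\sqrt{r(1/m + 1/n)}$ quoted above is easy to evaluate directly. The ranks in this sketch are illustrative choices, not necessarily the ones reported in the paper.

```python
import math

def relative_complexity(r, m, n):
    """Ratio sqrt(r * (1/m + 1/n)) of low-rank to full-rank complexity."""
    return math.sqrt(r * (1.0 / m + 1.0 / n))

# Illustrative ranks for a 512 x 512 layer.
ratios = {r: relative_complexity(r, 512, 512) for r in (4, 16, 64)}
for r, ratio in ratios.items():
    print(f"r={r:>2}: {ratio:.3f}")
```

For $m=n=512$ the expression simplifies to $\sqrt{r}/16$, giving 0.125, 0.25, and 0.5 for $r = 4, 16, 64$.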

The rank-$r$ manifold is not an arbitrary bottleneck — it is a structured approximation class supported by the empirical spectral structure of learned neural network weights.

Weight matrices in trained neural networks routinely exhibit fast singular value decay: a small number of dominant directions captures most of the representational capacity. This motivates the rank-$r$ manifold as a natural approximation class rather than an artificial constraint.

The Eckart–Young–Mirsky bound controls rank approximation error through tail singular values $\sigma_{r+1}^2(W^\star) + \cdots$. When layer spectra decay quickly, these tails are small, and the rank-$r$ bias is genuinely negligible.
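The Eckart–Young–Mirsky statement can be checked numerically: truncating the SVD at rank $r$ leaves a squared Frobenius error equal to the sum of the squared tail singular values. The sketch below uses a synthetic matrix with geometrically decaying spectrum as a stand-in for a trained layer, which is an assumption made only for illustration.

```python
import numpy as np

def best_rank_r(W, r):
    """Truncated SVD: best rank-r approximation in Frobenius norm."""
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    W_r = (U[:, :r] * s[:r]) @ Vt[:r]
    tail = float(np.sum(s[r:] ** 2))  # sum of squared tail singular values
    return W_r, tail

rng = np.random.default_rng(0)
m, n, r = 40, 30, 5
# Build W with known singular values 1, 1/2, 1/4, ...
U, _ = np.linalg.qr(rng.standard_normal((m, n)))  # (m, n), orthonormal columns
V, _ = np.linalg.qr(rng.standard_normal((n, n)))  # (n, n), orthogonal
spectrum = 0.5 ** np.arange(n)
W = (U * spectrum) @ V.T

W_r, tail = best_rank_r(W, r)
err = float(np.linalg.norm(W - W_r, "fro") ** 2)
```

With this spectrum the tail after five components is already about $10^{-3}$, which is the regime in which the rank-induced bias is small.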

Experiments include SVD-initialized vs. randomly initialized low-rank factors, and rank ablations across architectures.

Ranks are chosen per-architecture based on empirical validation: $r=15$ for the MLP on MIMIC-III, $r=14/20$ for the LSTM on Beijing PM₂.₅, and $r=16$ for the Transformer on SST-2. Adaptive rank selection via sparse priors is a natural future direction.

Full-rank versus low-rank parameterization for a single $256 \times 256$ layer as the rank varies.

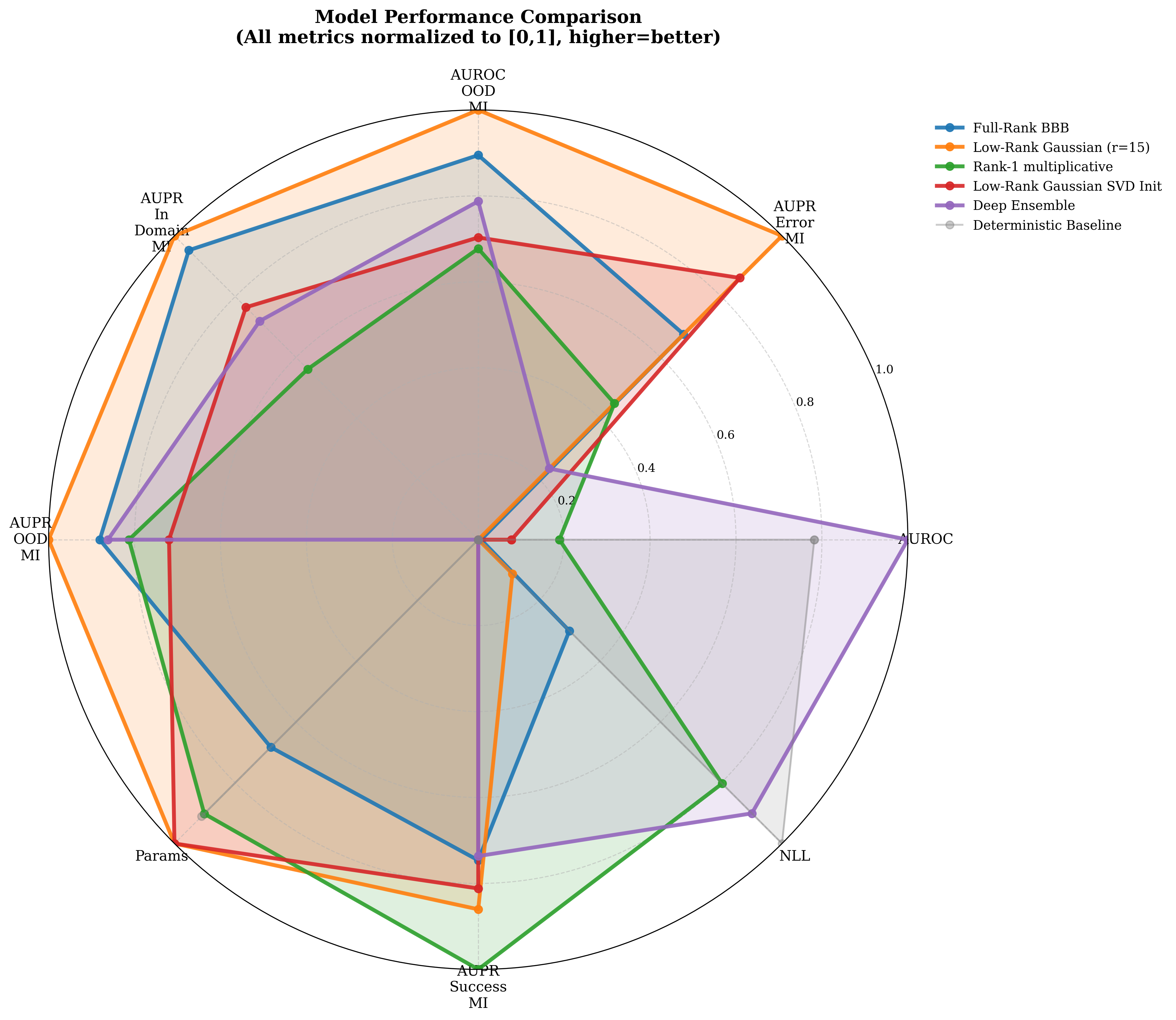
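The comparison for a single layer can be reproduced with a few lines, assuming Gaussian mean-field posteriors with two variational parameters (mean and scale) per entry and ignoring biases. The function name is hypothetical.

```python
def variational_param_counts(m, n, r):
    """Variational parameter counts for full-rank vs low-rank MFVI."""
    full_rank = 2 * m * n       # mean + scale for every entry of W
    low_rank = 2 * r * (m + n)  # mean + scale for every entry of A and B
    return full_rank, low_rank

full_rank, low_rank = variational_param_counts(256, 256, 16)
reduction = full_rank / low_rank
```

For a $256 \times 256$ layer at $r=16$ this gives 131072 versus 16384 variational parameters, an 8× reduction; the two counts coincide at $r = mn/(m+n) = 128$.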

Three architectures. Three domain-shift scenarios. Six uncertainty baselines. Each setting includes OOD evaluation on held-out distribution shifts.

| Model | AUROC ↑ | AUPR-Err ↑ | AUC-OOD ↑ | AUPR-OOD ↑ | AUPR-In ↑ | NLL ↓ | Params ↓ |
|---|---|---|---|---|---|---|---|
| Deterministic | .922 | .145 | .500 | .544 | .456 | .284 | 22.4k |
| Deep Ensemble | .929 | .237 | .738 | .754 | .721 | .300 | 112k |
| Full-Rank BBB | .895 | .412 | .770 | .759 | .807 | .401 | 44.8k |
| Low-Rank BBB | .895 | .540 | .802 | .788 | .824 | .433 | 13.6k |

Best OOD detection metrics in the clinical-shift setting, with 70% fewer parameters than Full-Rank BBB.

Multi-seed averaged uncertainty metrics — MIMIC-III.

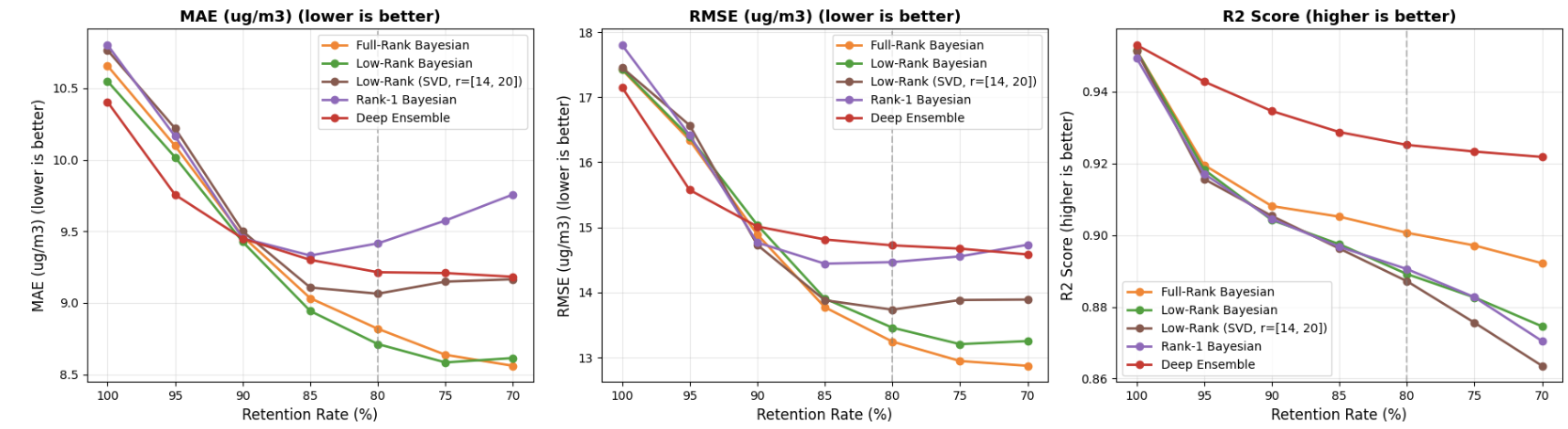

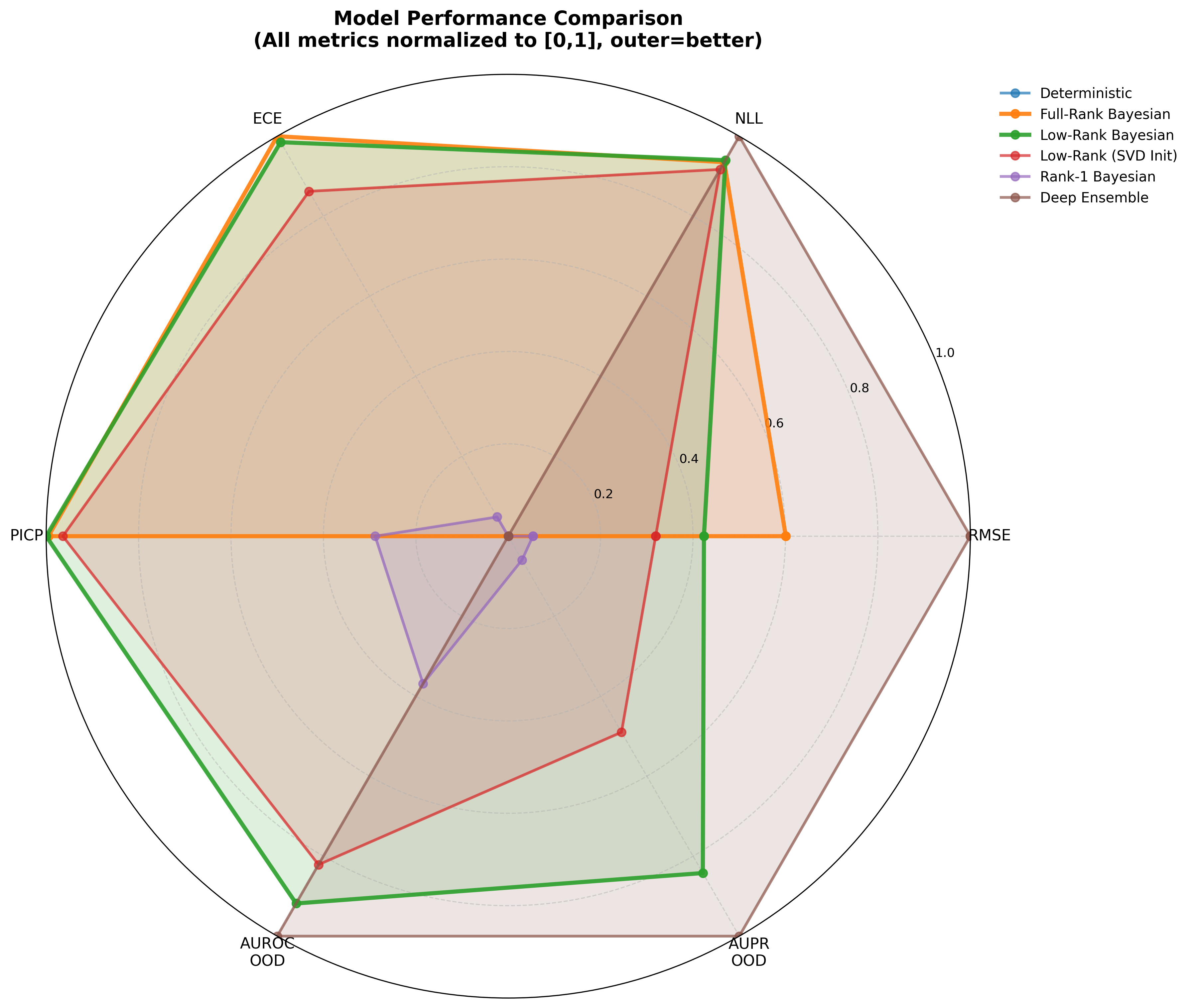

| Model | MAE ↓ | ECE ↓ | PICP ↑ | AUROC-OOD ↑ | AUPR-OOD ↑ | Params ↓ |
|---|---|---|---|---|---|---|
| Deterministic | 10.79 | — | — | .500 | .500 | 33K |
| Full-Rank BBB | 10.55 | .111 | .788 | .492 | .743 | 132K |
| Low-Rank BBB | 10.63 | .114 | .790 | .710 | .861 | 47K |
| Deep Ensemble | 10.45 | .317 | .310 | .730 | .883 | 330K |

Best coverage (PICP) among Bayesian methods. At 80% retention, Low-Rank BBB achieves MAE 8.71 vs 9.21 for Deep Ensemble — structured correlations improve selective prediction quality.

Selective prediction: Low-Rank achieves lower MAE at each retention threshold.
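For reference, the two interval-quality quantities used here can be computed as below. This is a generic sketch of MAE at a retention level (keeping the least-uncertain fraction of predictions) and of PICP (fraction of targets inside the predicted interval); the function names and toy numbers are illustrative and are not taken from the paper's code or data.

```python
import numpy as np

def mae_at_retention(y_true, y_pred, uncertainty, retention):
    """MAE over the `retention` fraction of points with lowest uncertainty."""
    n_keep = max(1, int(round(retention * len(y_true))))
    keep = np.argsort(uncertainty)[:n_keep]
    return float(np.mean(np.abs(y_true[keep] - y_pred[keep])))

def picp(y_true, lower, upper):
    """Prediction interval coverage probability."""
    return float(np.mean((y_true >= lower) & (y_true <= upper)))

# Toy data: the most uncertain prediction is also the worst one.
y_true = np.zeros(5)
y_pred = np.array([1.0, 2.0, 3.0, 4.0, 10.0])
unc = np.array([0.1, 0.2, 0.3, 0.4, 0.9])

mae_all = mae_at_retention(y_true, y_pred, unc, 1.0)
mae_80 = mae_at_retention(y_true, y_pred, unc, 0.8)
coverage = picp(np.array([1.0, 2.0, 3.0, 4.0]),
                np.array([0.0, 0.0, 4.0, 0.0]),
                np.array([2.0, 1.0, 5.0, 5.0]))
```

When uncertainty is informative, dropping the most uncertain points lowers the MAE, which is the behavior the retention curves measure.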

Uncertainty metric radar — LSTM · Beijing PM₂.₅.

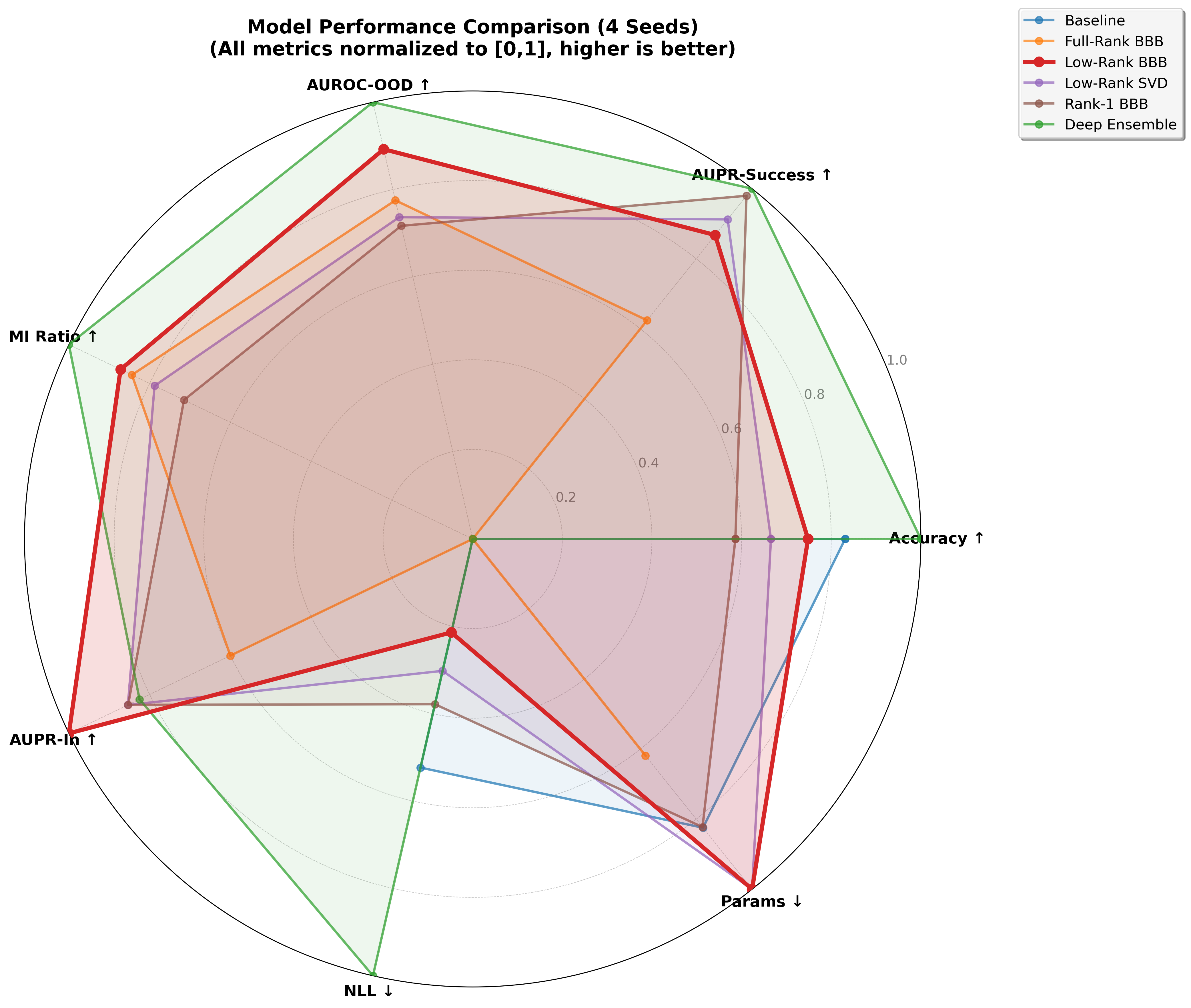

| Model | Acc ↑ | NLL ↓ | AUROC-OOD ↑ | MI Ratio ↑ | AUPR-In ↑ | Params ↓ | Time |
|---|---|---|---|---|---|---|---|
| Deterministic | .812 | .490 | .500 | .000 | .102 | 9.9M | 7.7 min |
| Deep Ensemble | .825 | .434 | .657 | 1.55 | .267 | 49.6M | 64.7 min |
| Full-Rank BBB | .752 | .552 | .622 | 1.31 | .222 | 19.8M | 23.1 min |
| Low-Rank BBB | .806 | .527 | .640 | 1.35 | .302 | 1.5M | 8.2 min |

Best AUPR-In and second-best AUROC-OOD. Trains in 8.2 min vs 64.7 min for Deep Ensemble.

Aggregated uncertainty radar over 4 seeds — SST-2 Transformer.

SWAG is a credible scalable posterior. It does not overturn the low-rank quality–efficiency tradeoff: on SST-2 it uses 208M parameters vs 1.47M; on MIMIC-III Low-Rank leads on MI-based OOD (0.802/0.788 vs 0.634/0.680).

| Setting | SWAG strength | Low-rank advantage |
|---|---|---|
| SST-2 | Accuracy tied (0.808 vs 0.806) | 1.47M params vs 208M; better AUPR-In |
| MIMIC-III | Higher in-domain AUROC / NLL | OOD MI: 0.802/0.788 vs 0.634/0.680 |
| Beijing PM₂.₅ | Higher raw coverage | Narrower intervals; stronger OOD detection |

A consistent pattern across all three tasks: the rank constraint trades predictive sharpness (NLL) for broader epistemic uncertainty, benefiting OOD detection, abstention, and coverage.

MIMIC-III: low-rank improves OOD detection despite weaker NLL than Deep Ensembles. Structured uncertainty outperforms under clinical domain shift.

SST-2: Deep Ensemble is stronger on NLL and OOD. Low-rank remains competitive at 33× fewer parameters, an entirely different efficiency regime.

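Beijing PM₂.₅: low-rank achieves the best coverage among Bayesian methods and the best selective prediction, with calibration close to Full-Rank BBB (ECE .114 vs .111). The OOD advantage is secondary to interval quality.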

Result · Low-Rank Ensembling

Ensembling five low-rank members further improves every uncertainty metric, demonstrating that the two techniques stack. This opens a practical design space: smaller, cheaper ensembles of low-rank members can match or exceed a full-rank Deep Ensemble at a fraction of the parameter count.

The empirical message is not compression alone, and not that low-rank wins every metric.

OOD separation on MIMIC; coverage and selective prediction on Beijing; efficiency-adjusted uncertainty on SST-2.

In-distribution likelihood and predictive sharpness can favour Deep Ensembles. SWAG is a credible high-parameter baseline in some settings.

Trustworthy AI needs useful uncertainty under shift and deployment constraints, not only marginal NLL. SBNNs make that goal practical at scale.

Four decisions govern how well SBNN works in practice. Here is what the paper's experiments teach about each.

To choose the rank, run ablation studies with a reduced budget first (fewer epochs and fewer MC samples during validation). This is the primary method; no pretrained weights are needed.

When a deterministic baseline already exists, use its singular value decay (SVD of trained weight matrices) as optional validation: rapid decay justifies the chosen rank. For SST-2, the embedding layer (70% of parameters) shows particularly fast decay.

Selected ranks in the paper: r=15 for MIMIC-III MLP, r=14/20 for Beijing LSTM, r=16 for SST-2 Transformer.

Start with β = 1/N_batches or β = 1/N_train. Higher β pushes the posterior toward the prior, improving OOD detection but degrading in-distribution NLL — tune based on which matters more for your task.

Always use KL annealing: ramp β from 0 to full over the first ~20 epochs. This lets the model find a good likelihood basin before the regularization penalty engages — especially important for LSTMs and Transformers where early KL dominance prevents learning entirely.
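A minimal sketch of such a schedule, assuming a linear ramp (the text only says "ramp", so the linear shape is an assumption) and hypothetical function names:

```python
def kl_weight(epoch, beta_max, warmup_epochs=20):
    """Linear ramp from 0 to beta_max over warmup_epochs, then constant."""
    if warmup_epochs <= 0:
        return beta_max
    return beta_max * min(1.0, epoch / warmup_epochs)

def annealed_loss(nll, kl, epoch, beta_max, warmup_epochs=20):
    """Negative ELBO with an annealed KL term: NLL + beta(epoch) * KL."""
    return nll + kl_weight(epoch, beta_max, warmup_epochs) * kl

# Example: beta_max = 1 / N_batches with a hypothetical 100 batches per epoch.
beta_max = 1.0 / 100
schedule = [kl_weight(e, beta_max) for e in (0, 10, 20, 50)]
```

At epoch 0 the objective is the likelihood term alone; the full KL penalty applies from epoch 20 onward.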

Only use SVD warm-starting when a pretrained deterministic model already exists and comes for free. Results: modest gains on specific metrics (MIMIC AUROC: 0.898 vs 0.895; SST-2 AUPR-Succ: 0.923 vs 0.917), but inconsistent and task-dependent.

On Beijing LSTMs, random initialization outperforms SVD overall. The improvement does not justify training an additional deterministic model if one is not already available.

All layers are drop-in Keras replacements — no other code changes needed. For LSTMs: forget gate bias = 1.0 and use weight caching (sample once per batch, reuse across time steps). For Transformers: the embedding layer alone is 70% of parameters; factorizing it with the sparsity trick gives the largest single efficiency gain.

At small scales (MLP, small LSTM), parameter reduction translates to memory savings, not wall-clock speedup, because the factorized layer performs two matrix multiplications instead of one. Efficiency gains emerge at Transformer scale.

Two supplementary experiments corroborate the main findings at small scale: a controlled MNIST study and a toy regression showing epistemic uncertainty behavior OOD.

4-layer MLP ($784 \to 1200 \to 1200 \to 10$, ReLU). Same scale-mixture prior. 50 MC samples at test time. Low-Rank uses $r=25$ for hidden layers. Low-Rank Laplace achieves the best NLL of all variants.

Calibration gap is only 0.002–0.0035 ECE in absolute terms across binning schemes — practically negligible for a 19.5× parameter reduction. Low-Rank Laplace achieves NLL 0.0607 vs 0.0795 for Full-Rank, suggesting heavier-tailed factor posteriors improve likelihood estimation.

MLP $1\to100\to100\to1$ (tanh). Train on $x\sim\text{Unif}[-0.1,\,0.6]$, evaluate on $x\in[-0.5,\,1.5]$. Low-Rank uses $r=16$ for the hidden layer — 65% fewer parameters.

Low-Rank maintains a wider absolute OOD uncertainty band (IQR 0.094 vs 0.048), providing more conservative credible intervals outside training support. The OOD/in-domain expansion ratio (2.01× vs 1.90×) confirms that qualitative epistemic sensitivity is preserved: uncertainty grows when leaving the training domain. In-domain uncertainty is also higher, reflecting the rank constraint's regularizing effect.

This work introduces SBNNs through the measure-theoretic singularity of the induced posterior. The singular posterior geometry provides both theoretical foundations and practical benefits for uncertainty quantification, making low-rank factorization a principled path toward scalable Bayesian deep learning. Several natural extensions open from here:

Ranks chosen by spectra, validation tradeoffs, or sparse priors (spike-and-slab) rather than fixed grids. Per-layer rank scheduling could further reduce parameter count.

Extending to large language models and vision transformers. The $O(r(m+n))$ scaling makes this far more tractable than full-rank Bayesian inference.

Laplace, spike-and-slab, and richer factor distributions while keeping singular support. Non-Gaussian factors can represent multi-modal posterior landscapes.

Uncertainty that supports abstention, calibrated coverage, OOD awareness, and constrained deployment in clinical decision support and autonomous systems.

@inproceedings{toure2026singular,
  title = {Singular Bayesian Neural Networks},
  author = {Toure, Mame Diarra and Stephens, David A.},
  booktitle = {International Conference on Machine Learning},
  year = {2026}
}